
In May 2026, a family in California escalated a school-grade dispute into federal court. Their child, a sophomore at a Palo Alto public high school, had written an essay on Arthur Miller's «The Crucible» and turned it in. Turnitin's AI detector flagged the essay as 76% likely to be AI-generated. The teacher, relying on that score, required the student to rewrite the essay in class under supervision. The grade fell from a low A or high B to a C. The family submitted a 1,162-page evidentiary packet of Google Docs revision history showing that the student had written the work over several days. The district declined to restore the grade. The case is now pending in the U.S. District Court for the Northern District of California as Doe et al v. Palo Alto Unified School District et al., docket 5:25-cv-04202.

It is one of at least six active lawsuits in 2025–2026 that share a structural problem. ESL writers (students whose first language is not English) and other groups (autistic students, students with OCD, students using assistive technologies) are being flagged at far higher rates than the general population. Federal and state courts are starting to take notice. Universities including Waterloo, Vanderbilt, MIT, and Curtin are disabling or restricting AI detection. And Turnitin itself, in a February 2026 announcement, said it is shifting «from detection to transparency».

This article is for ESL writers — students, graduate researchers, immigrant professionals — whose work is being read by software that was not built with them in mind. It explains what the research actually shows, what the cases say so far, what universities are doing in response, and how to protect your work before it ever gets flagged.

1. The Stanford finding that started the conversation

In April 2023, a Stanford team led by Weixin Liang and James Zou tested seven commercial AI detectors on essays written by non-native English speakers. On average, the detectors flagged 61.22% of those human-written essays as AI-generated, while correctly recognising US 8th-grade essays as human. The paper appeared in the Cell Press journal Patterns and reframed the entire detection debate.

The researchers took 91 essays written by non-native English speakers (sourced from TOEFL preparation materials) and 88 essays written by US 8th graders. They ran every essay through seven commercial AI detectors: Originality.AI, Quill.org, Sapling, OpenAI's GPT-2 Output Detector, Crossplag, GPTZero, and ZeroGPT. The result:

- On average across the seven detectors, 61.22% of the human-written non-native English essays were incorrectly flagged as AI-generated.

- 97.8% of those essays were flagged as AI by at least one of the seven detectors.

- 19.8% of them were flagged unanimously by all seven.

- The same detectors were «near-perfect» at correctly recognising the US 8th-grade essays as human.

To test the cause, the researchers ran an additional experiment. They took the verified-human US essays and asked ChatGPT to «simplify» the word choices to sound non-native. The false-positive rate spiked. They took the TOEFL essays and asked ChatGPT to «enhance» the language to sound native. The false-positive rate dropped. The detectors were not finding AI. They were punishing linguistic simplicity.

The reason lies in what these detectors measure. Many rely on two signals: perplexity (how predictable each word choice is) and burstiness (how much sentence length and structure vary). Large language models tend to generate low-perplexity, low-burstiness text: they favour highly probable next words and produce fairly uniform output. Non-native English writers, especially those still building vocabulary, naturally produce low-perplexity prose. They use safer word choices and standard grammar. To a detector built around perplexity, that looks like a machine wrote it.
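The two signals can be illustrated with a toy sketch. This is not any vendor's implementation: real detectors obtain per-token probabilities from a language model, whereas the probabilities below are invented for illustration, and the function names `perplexity` and `burstiness` are our own. Burstiness is approximated here as the coefficient of variation of sentence length, which is only one of several possible definitions.

```python
import math
import re
import statistics


def perplexity(token_probs):
    """Perplexity: exp of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


def burstiness(text):
    """Coefficient of variation of sentence lengths, counted in words."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical per-token probabilities (made up, not from a real model).
safe_word_choices = [0.5, 0.5, 0.5, 0.5]       # every word is predictable
surprising_choices = [0.5, 0.01, 0.5, 0.01]    # some words are unexpected

print(perplexity(safe_word_choices))    # low perplexity
print(perplexity(surprising_choices))   # higher perplexity

uniform = "I like tea. I like milk. I like rice."
varied = "Stop. I walked to the old market by the river yesterday."
print(burstiness(uniform))   # 0.0, all sentences have the same length
print(burstiness(varied))    # larger, sentence lengths differ a lot
```

In this sketch, careful and predictable writing scores low on both measures, which is the same profile a perplexity-based detector associates with machine output.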

The mechanism is not a bug that can be fixed with a model update. As researchers Hadra, Cambridge, and Mesbah (2026) put it in the International Journal for Educational Integrity (Springer), the trade-off between detection power and false accusations is «a structural mathematical limit, not an engineering flaw that can be patched».

2. What the cases are saying so far

At least six AI-detection lawsuits are active in 2025 and 2026, with mixed results. Where the school's case rests on a detector score alone, courts are increasingly sceptical and one ruling has already called the institutional decision «devoid of reason». Where the detector flag is backed by independent evidence of cheating, judges defer to teachers and administrators.

Newby v. Adelphi University — student won

In January 2026, the New York Supreme Court (Nassau County) ruled in Matter of Newby v. Adelphi University. Orion Newby, a freshman who was diagnosed with Level 2 Autism Spectrum Disorder and enrolled in Adelphi's «Bridges Program» (a learning-support initiative for students with neurodevelopmental conditions), had been accused of AI plagiarism after a Turnitin score of 100% on a history paper. The university upheld a failing grade and required him to take an anti-plagiarism course. His family spent over $100,000 in legal fees challenging the decision.

Hon. Randy Sue Marber ruled that the university's decision was, in her exact wording, «without valid basis and devoid of reason». The court ordered Adelphi to rescind the penalty and fully expunge the student's record. The decision is now considered a landmark ruling because it establishes, at least at the state-court level in New York, that institutions cannot treat a detector score as a final verdict.

Doe v. Yale University — ESL Title VI claim, pending

An Executive MBA student at Yale was suspended for a year after a final exam was flagged by GPTZero. The student, a non-native English speaker proceeding under the pseudonym «John Doe», filed suit in U.S. District Court for the District of Connecticut (docket 3:25-cv-00159-SFR). The complaint argues that GPTZero's algorithm has implicit bias against ESL writers — the exact linguistic mechanism the Stanford study documented — and that using the tool to discipline a non-native speaker amounts to national-origin discrimination under Title VI of the Civil Rights Act of 1964. The case is pending.

R.N.H. v. Hingham Public Schools — student lost the injunction

Not every case is going the students' way. In Massachusetts, the parents of a high school student (identified by initials R.N.H.) sued Hingham Public Schools after the student was disciplined for using AI on a history project. The federal court denied the family's request for an injunction. The judge reasoned that the case was «not about AI… but straightforward academic dishonesty», because the teacher's investigation had uncovered AI-hallucinated citations in the student's work, including a non-existent book titled «Hoop Dreams: A Century of Basketball» attributed to a non-existent author. The court declined to «second-guess the decisions of teachers and administrators». It is an important counter-case: when a detector flag is combined with independent evidence of cheating, courts are reluctant to overturn the school's call.

What separates the outcomes is whether the school's decision rests on the detector alone (which courts are increasingly sceptical of) or whether the detector flag is corroborated by other evidence (in which case courts defer to educators). The implication for an ESL writer who is doing their own work: the strongest defense is preserved evidence of process (drafts, timestamps, revision history), assembled long before a detector ever sees the final paper.

3. Universities are quietly dropping AI detection

While litigation works through the courts, major universities are turning AI detection off on their own. Waterloo, Vanderbilt, MIT, and Curtin have publicly disabled or restricted Turnitin's AI detector, citing unreliability and bias against non-native English speakers. Many more institutions have switched the feature off in their learning management systems without a press release.

University of Waterloo formally discontinued Turnitin's AI detection feature in September 2025. The Associate Vice-President Academic stated publicly: «Research has shown that AI detection tools are unreliable… AI detection tools have also been found to be biased toward students whose first language is not English… Given the expense of the tool in U.S. dollars, unreliability, and bias, it was determined the costs associated with Turnitin's AI detection feature outweigh the benefits.»

Vanderbilt University disabled Turnitin's AI detector. Their administrative guidance states: «Instances of false accusations of AI usage being leveled against students at other universities have been widely reported… In addition to the false positive issue, AI detectors have been found to be more likely to label text written by non-native English speakers as AI-written.»

MIT published internal teaching guidance titled, in its full bluntness, «AI Detectors Don't Work. Here's What to Do Instead».

Curtin University in Australia announced it will disable Turnitin's AI writing detection starting in 2026 while keeping the standard plagiarism check active.

Several other universities have quietly disabled or restricted AI detection — among them American University, Boston University, UC Berkeley, Colorado State, DePaul, Georgetown, Michigan State, NYU, and University of Cape Town. Most did this without a press release; they let contracts lapse or toggled the feature off in their learning management system.

4. Even Turnitin admits the limits

Turnitin's own materials have a hard-to-find but specific caveat. The company has stated that its AI indicator has «an uncertainty band of roughly plus or minus 15 percentage points» depending on the length of the text. The same documentation says the indicator «should not be the sole basis for punitive action».

The history of Turnitin's accuracy claims is instructive. At the February 2023 launch of the AI detector, the company announced — confidently — that the tool «identifies 97 percent of ChatGPT and GPT3 authored writing, with a very low less than 1/100 false positive rate». Three months later, in a May 2023 blog post, Chief Product Officer Annie Chechitelli acknowledged: «real-world use is yielding different results from our lab». In February 2026, CEO Chris Caren announced a pivot — the company is moving «from detection to transparency», emphasising tools that track the writing process rather than flagging finished documents.
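A short calculation shows why the false positive rate matters more than the headline detection figure. The sketch below applies Bayes' rule to the launch claims quoted above (97% detection, treating «less than 1/100» as 1%). Everything else is an assumption made for illustration: the share of submissions that are actually AI-written, the number of human-written essays, and the higher false positive rates used to model real-world results that differ from the lab.

```python
def share_of_flags_that_are_ai(prevalence, detection_rate, false_positive_rate):
    """Bayes' rule: probability that a flagged essay really is AI-written."""
    true_flags = prevalence * detection_rate
    false_flags = (1 - prevalence) * false_positive_rate
    return true_flags / (true_flags + false_flags)


def expected_false_flags(human_essays, false_positive_rate):
    """Average number of human-written essays flagged by mistake."""
    return human_essays * false_positive_rate


DETECTION_RATE = 0.97   # launch claim quoted in the article
PREVALENCE = 0.10       # hypothetical: 1 in 10 submissions is AI-written
HUMAN_ESSAYS = 10_000   # hypothetical number of human-written submissions

# 0.01 is the launch claim; 0.05 and 0.20 are hypothetical what-if values.
for fpr in (0.01, 0.05, 0.20):
    share = share_of_flags_that_are_ai(PREVALENCE, DETECTION_RATE, fpr)
    wrongly_flagged = expected_false_flags(HUMAN_ESSAYS, fpr)
    print(f"FPR {fpr:.0%}: {share:.0%} of flags are truly AI, "
          f"about {wrongly_flagged:.0f} human essays flagged")
```

Under these assumptions, even the claimed 1% rate means about 100 wrongly flagged essays per 10,000 human submissions, and the share of flags that point at real AI use falls from roughly 92% to roughly 35% as the false positive rate rises from 1% to 20%.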

An audit by the University of Waterloo, before they disabled the tool, found that «in more than one instance the product flagged human-written text as 100% generated by AI». A 2026 peer-reviewed study by Hadra et al. found Turnitin's overall accuracy at 61%. The Anthology educational-technology group, which evaluated AI detection across its higher-education clients, concluded that «AI detection is not currently fit for purpose in education».

5. What ESL writers should actually do

If you are an ESL writer doing your own work, the legal trends are encouraging but they will not help you in the meeting next Tuesday. What matters in practice is the evidence you have before anyone questions your writing.

Build the proof trail while you are writing

The Palo Alto family submitted 1,162 pages of revision history because they had it. Google Docs preserves a full version history by default: every edit, paste, deletion, and timestamp. Microsoft Word (for files saved to OneDrive or SharePoint), Notion, and most modern editors keep a version history too. Make sure you are writing in a tool that keeps this history, and avoid tools that do not.

If you draft in your native language first and then translate into English (a common, legitimate ESL workflow), keep the original draft. It is some of the most convincing evidence you can offer. A judge or administrator looking at a paper trail that shows «here is my Ukrainian outline, here is the English translation, here are five revisions of the English» is reading the same kind of evidence Diglot's Authorship Certificate makes verifiable by cryptographic signature.

If you are flagged, do not apologise

The first instinct when a teacher asks «did you use AI on this?» is to defend, explain, sometimes over-explain. The lesson from the Hingham case is that protest without evidence does not work; the lesson from the Newby case is that evidence without panic does. Lead with the paper trail. Ask which detector was used and what threshold triggered the flag. Note Turnitin's own ±15 percentage point uncertainty. Reference the Stanford study if you are an ESL writer — it is peer-reviewed, public, and exactly on point.

Know your institution's policy

The cases that students win share a structural feature: the institution's procedure for handling AI accusations was either absent or vague. The student is then surprised by a penalty that was not in any handbook at the start of the semester. Read your school's academic integrity policy. If it does not address AI specifically, that is an argument worth raising.

Ask about due process

The strongest cases against schools allege procedural due process violations — the institution did not give the student fair notice, a chance to present counter-evidence, or a clear appeal path. If you are accused, ask in writing for the specific procedural steps the institution will follow. That request alone tends to focus the conversation.

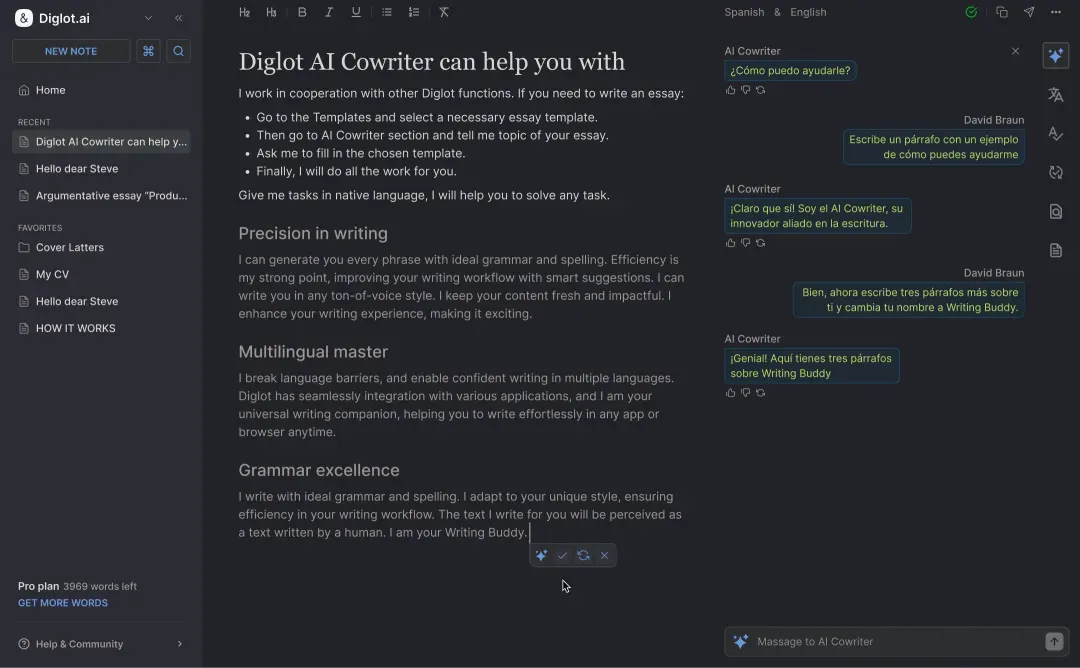

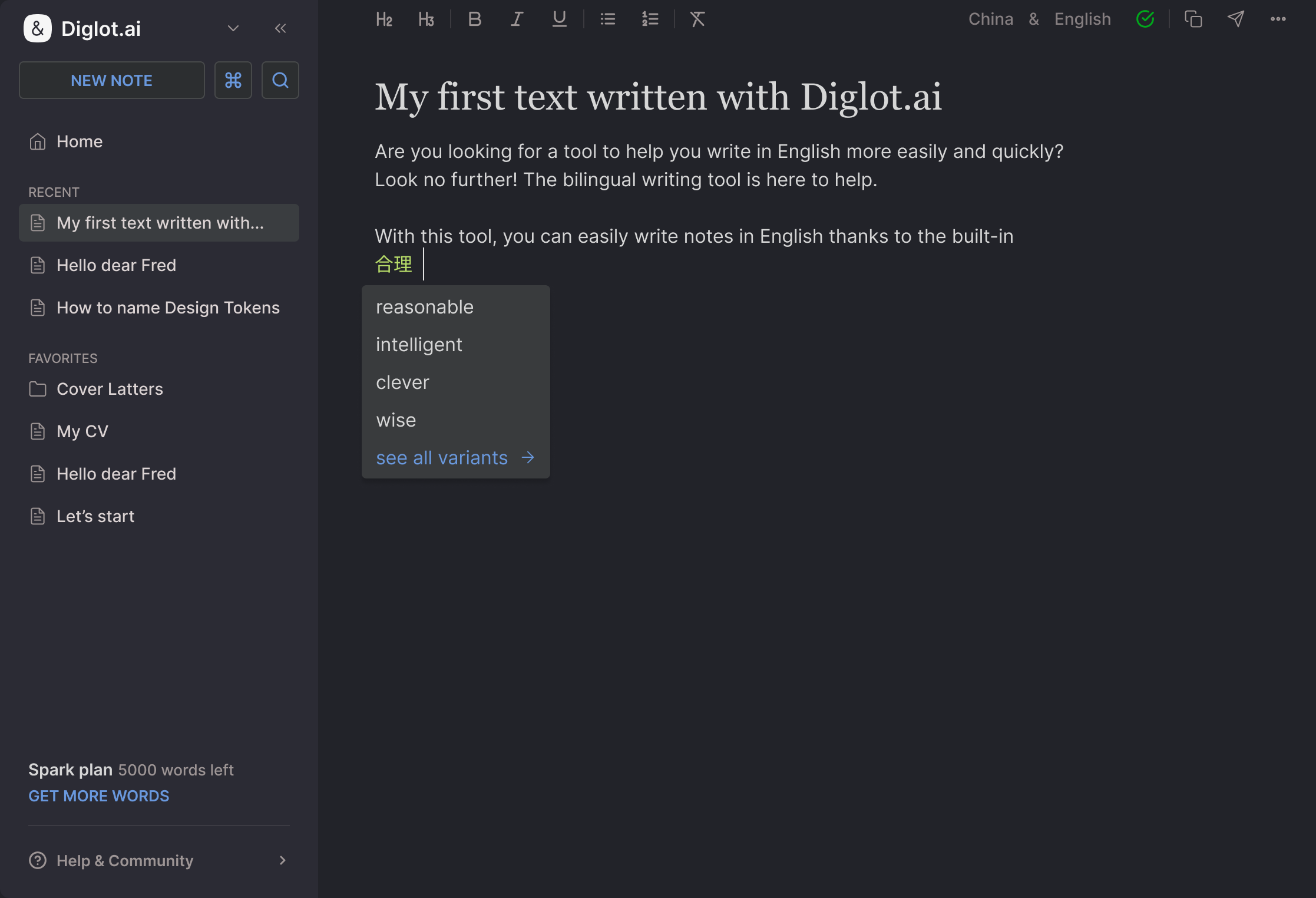

6. Where Diglot fits

Every ESL writer who runs into a false AI flag has, at root, an evidence problem. Revision history exists in most modern editors, but it is hard to share and easy for an institution to dismiss. Diglot's Authorship Certificate addresses that by signing the full edit chain with a cryptographic key and producing a public verification URL.

Diglot tracks every edit, paste, and AI-assist on your document and signs the resulting event chain with an Ed25519 cryptographic key. The result is a public verification URL that anyone can open in any browser, with no Diglot account required, to see a tamper-evident record of how the document was actually written. If you are an ESL writer drafting in your native language and refining in English, Diglot is built for that exact workflow, and the resulting Certificate is built for exactly the conversation this article is about.
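To give a feel for what an event chain is, here is a minimal sketch of a hash-chained edit log. It is an illustration under our own assumptions and not Diglot's actual implementation: the event fields, the function names `chain_events` and `verify_chain`, and the choice of SHA-256 are all hypothetical. The signing step is left out, because Python's standard library has no Ed25519 support; in a signed design, the final hash would be signed with the private key and checked against the public key.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first event


def _digest(event, prev_hash):
    """Hash one event together with the hash of the event before it."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def chain_events(events):
    """Link each event to its predecessor so later edits cannot be hidden."""
    prev_hash = GENESIS
    chained = []
    for event in events:
        current = _digest(event, prev_hash)
        chained.append({"event": event, "prev": prev_hash, "hash": current})
        prev_hash = current
    return chained


def verify_chain(chained):
    """Recompute every hash; any altered, removed, or reordered event fails."""
    prev_hash = GENESIS
    for record in chained:
        if record["prev"] != prev_hash:
            return False
        if _digest(record["event"], prev_hash) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


# Hypothetical edit events for one short document.
log = chain_events([
    {"type": "insert", "text": "Hello"},
    {"type": "paste", "text": " world"},
])
print(verify_chain(log))             # True

log[0]["event"]["text"] = "Goodbye"  # tamper with the first event
print(verify_chain(log))             # False
```

Because each hash depends on everything before it, a signature over the last hash would cover the whole writing history, which is what lets a third party check the record without trusting the person who shares it.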

If you want to learn more about how the Certificate is signed and how a third party verifies it, read the «How it works» page. For the research behind why detectors over-flag ESL writing in the first place, read the companion piece «Why AI detectors misread non-native English». For a practical step-by-step defense guide, read «How to prove your essay is human-written». If you are a freelancer dealing with client accusations, see «What to do if a client flags your writing as AI». And for tips on writing English that sounds natural (and less flaggable), read «How to make your English writing sound natural». Browse the full Authorship Certificate research category for more case studies and policy updates. If you want to try the bilingual writing flow, the free tier covers most use cases.

Sources cited in this article

This article cites peer-reviewed research from Stanford and Springer, court filings and rulings from federal and state courts, public statements from the University of Waterloo and Vanderbilt, Turnitin's own product disclosures, and reporting from Hoodline. Each reference is linked below. Where rulings or institutional positions are still active, we will update this page as material changes land.

- Liang, W., Zou, J., et al. (2023). «GPT detectors are biased against non-native English writers.» Patterns, Cell Press. arxiv.org/abs/2304.02819 · DOI: 10.1016/j.patter.2023.100779

- Hadra, Cambridge, Mesbah (2026). «AI Detectors Fail Diverse Student Populations: A Mathematical Framing of Structural Detection Limits.» International Journal for Educational Integrity, Springer.

- Matter of Newby v. Adelphi University (2026), Hon. Randy Sue Marber, New York Supreme Court (Nassau County): law.justia.com

- Doe et al v. Palo Alto Unified School District et al., docket 5:25-cv-04202, U.S. District Court for the Northern District of California (filed 2025–2026, pending).

- Hoodline, «Palo Alto Parents Go Federal Over Teen's Turnitin 'AI Cheater' Tag» (May 11, 2026): hoodline.com

- University of Waterloo, «Discontinuing the use of AI detection functionality in Turnitin»: uwaterloo.ca

- Vanderbilt University, «Guidance on AI Detection and Why We're Disabling Turnitin's AI Detector»: vanderbilt.edu

- National Education Association, «Five Principles for the Use of Artificial Intelligence in Education» (2025): nea.org

- American Federation of Teachers, «Resolution on Artificial Intelligence»: aft.org

This article reports on active litigation and evolving policy. Specific case statuses and institutional positions may change after publication. We will update this page when material rulings land. Last verified: 2026-05-14.