
Non-native English writers are getting flagged as AI-generated more often than native writers. The cause is not a small calibration error — it is a structural property of how AI detectors work. Detectors look for "low perplexity" — text that follows predictable patterns. ESL writers, taught to follow textbook grammar rules carefully, produce exactly that kind of writing. The cleaner your English, the more it looks like a model wrote it.

This article is for non-native English students and professionals who are doing their own work and getting accused of using AI. It explains why the bias exists, what a plagiarism check actually proves (and what it does not), and how to build an originality defense that holds up under scrutiny.

1. Why AI detectors target non-native English writing

AI detectors flag non-native English at high rates because they measure predictability, not AI involvement. ESL writers, taught to follow textbook grammar carefully, produce low-perplexity prose with steady sentence rhythm. Large language models produce the same shape by design. The detector reads both as "AI-written", even when one is a student who studied for years.

AI detectors do not actually detect AI. They detect predictability. Specifically, they measure two things:

- Perplexity — how surprising each word choice is, given the surrounding context. Low perplexity = predictable = looks AI-generated.

- Burstiness — how much sentence-length variety exists in the text. Low burstiness = uniform sentence rhythm = also looks AI-generated.
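To make the two measurements concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not how any commercial detector is implemented: real detectors score perplexity with a large neural language model, while this version substitutes a simple word-frequency (unigram) model built from a reference text. Burstiness is approximated as the standard deviation of sentence length divided by the mean. The function names are illustrative only.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def _tokens(text: str) -> list[str]:
    """Lowercase word tokens; a deliberately crude tokenizer."""
    return re.findall(r"[a-z']+", text.lower())


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Assumed proxy: standard deviation of sentence length over its mean."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    Stand-in for a neural language model: lower values mean the words in
    `text` are more predictable given the reference.
    """
    ref_counts = Counter(_tokens(reference))
    words = _tokens(text)
    if not words:
        raise ValueError("text contains no words")
    total = sum(ref_counts.values())
    vocab_size = len(ref_counts) + 1  # one extra bucket for unseen words
    log_prob = sum(
        math.log((ref_counts[w] + 1) / (total + vocab_size)) for w in words
    )
    return math.exp(-log_prob / len(words))
```

Three sentences of identical length give a burstiness of zero, and a passage built from common reference words scores a lower perplexity than one built from rare words. That is the shape a detector treats as suspicious.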

Native English writers tend to produce higher-perplexity, higher-burstiness prose. They use idioms, slang, sentence fragments, and unexpected word choices. They mix three-word sentences with thirty-word ones. AI models, and ESL writers carefully following grammar rules, produce the opposite: clean, regular, "correct" sentences that follow textbook patterns.

The result is a documented bias. A 2023 Stanford study by Liang et al. (published in Patterns, Cell Press) found that GPT detectors flagged over half of essays written by non-native English speakers as AI-generated, while flagging native-written essays at a much lower rate. The gap was not small, and the cause was not the writers cheating. It was the detectors confusing "non-native English" with "AI English."

Inside Diglot's product team we call this experience flagxiety — the constant, low-grade fear that the work you actually did will be dismissed as something a model produced. It is not paranoia. The detectors really are biased against you. Knowing that is the first step in defending yourself.

2. What a plagiarism check actually proves

A plagiarism check proves your text does not overlap an existing source in a large database — a hard, verifiable fact about originality. It does not, by itself, prove human authorship, since paraphrased AI output can still pass an overlap check. But a clean report eliminates the most common cheating scenario and shifts the burden of proof back.

| Tool | What it does | What it cannot do |
|---|---|---|
| Plagiarism checker | Compare your text against a database of existing sources to find overlap. | Tell whether a unique passage was AI-generated or human-written. |
| AI detector | Estimate how predictable the text is, then guess if a model produced it. | Distinguish "predictable because AI" from "predictable because non-native English." |

A plagiarism check is the part of this story that does work reliably. If your essay shows zero overlap against a billion-document database, that is a hard, evidence-based fact: the text was not copied from any indexed source. No AI detector verdict can erase that.

This is why a plagiarism check still matters — even in a world where AI detectors get all the attention. Originality is verifiable. AI authorship is, with current tools, not.
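The overlap comparison a plagiarism checker performs can be illustrated with word n-grams ("shingles"). The sketch below is a simplification under assumptions: production checkers index very large corpora and use fuzzier matching, and the function names and the five-word window are choices made here for illustration.

```python
import re


def shingles(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Return the set of overlapping n-word sequences in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(draft: str, source: str, n: int = 5) -> float:
    """Fraction of the draft's n-word shingles that also appear in the source."""
    draft_shingles = shingles(draft, n)
    if not draft_shingles:
        return 0.0
    shared = draft_shingles & shingles(source, n)
    return len(shared) / len(draft_shingles)
```

Note what the ratio measures: shared word sequences with a known source. It says nothing about how predictable the prose is, which is why it does not share the detector's bias.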

3. The defense workflow for non-native writers

A real defense against false AI flags is built before any accusation lands. Run a plagiarism check on every finished draft and keep the report. Preserve revision history in Google Docs or another editor that timestamps every edit. Vary sentence rhythm during the language pass. For high-stakes work, log the writing process with a signed authorship record.

Step 1 — Run a plagiarism check before submission

Run your finished work through a plagiarism checker. If the report shows under 10% overlap (mostly your citations and reference list, which any good tool will identify separately), that is your originality baseline. Save the report. PDF it. Date-stamp it. This is your first piece of evidence.

Step 2 — Keep your draft history

The single strongest defense against an AI accusation is showing your work. Track your draft versions: Google Docs version history works, Notion timestamps work, even Git commits work. Multiple drafts with messy intermediate edits are something AI does not produce. A clean single-pass document looks suspicious; a document with thirty visible revisions looks human.
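If you write in a local editor without built-in history, a small script can keep dated copies of each draft. This is a hypothetical sketch, not a feature of any tool named above: `snapshot_draft` and the `manifest.jsonl` file name are invented for illustration. Local timestamps are easy to alter, so treat this as a supplement to a hosted history such as Google Docs, not a replacement.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def snapshot_draft(draft: Path, archive_dir: Path) -> Path:
    """Copy the draft into archive_dir and record its hash and save time."""
    archive_dir.mkdir(parents=True, exist_ok=True)
    data = draft.read_bytes()
    digest = hashlib.sha256(data).hexdigest()
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%S%fZ")

    # Timestamp plus a short hash prefix keeps snapshot names distinct.
    copy_path = archive_dir / f"{draft.stem}-{stamp}-{digest[:8]}{draft.suffix}"
    copy_path.write_bytes(data)

    # Append one JSON line per snapshot so the history is easy to read back.
    entry = {"file": copy_path.name, "sha256": digest, "saved_at_utc": stamp}
    with (archive_dir / "manifest.jsonl").open("a", encoding="utf-8") as manifest:
        manifest.write(json.dumps(entry) + "\n")
    return copy_path
```

Calling `snapshot_draft(Path("essay.txt"), Path("history"))` at the end of each writing session builds the trail of messy intermediate versions described above.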

Step 3 — Vary your sentence rhythm during revision

This is the part most ESL writers skip because it feels like breaking the rules. During the language-pass revision, intentionally introduce sentence-length variation. Put a four-word sentence between two longer ones. Use a one-word emphasis. Drop a sentence fragment into dialogue or a casual passage. This is normal native-English rhythm, and it raises your "burstiness" score, which makes AI detectors less likely to flag the text.
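A quick way to find where rhythm is too even is to look for runs of consecutive sentences with nearly the same word count. The helper below is a rough revision aid, assuming a naive sentence splitter and arbitrary thresholds (three or more sentences within two words of the run's first sentence). Its name and defaults are illustrative and do not come from any detector.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def uniform_runs(text: str, tolerance: int = 2, min_run: int = 3) -> list[list[str]]:
    """Return runs of at least min_run consecutive sentences whose word
    counts stay within `tolerance` words of the first sentence in the run."""
    runs: list[list[str]] = []
    current: list[str] = []
    for sentence in split_sentences(text):
        length = len(sentence.split())
        if current and abs(length - len(current[0].split())) <= tolerance:
            current.append(sentence)
        else:
            if len(current) >= min_run:
                runs.append(current)
            current = [sentence]
    if len(current) >= min_run:
        runs.append(current)
    return runs
```

Each returned run is a stretch worth revisiting: shorten one sentence, merge two, or add a fragment until the run breaks up.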

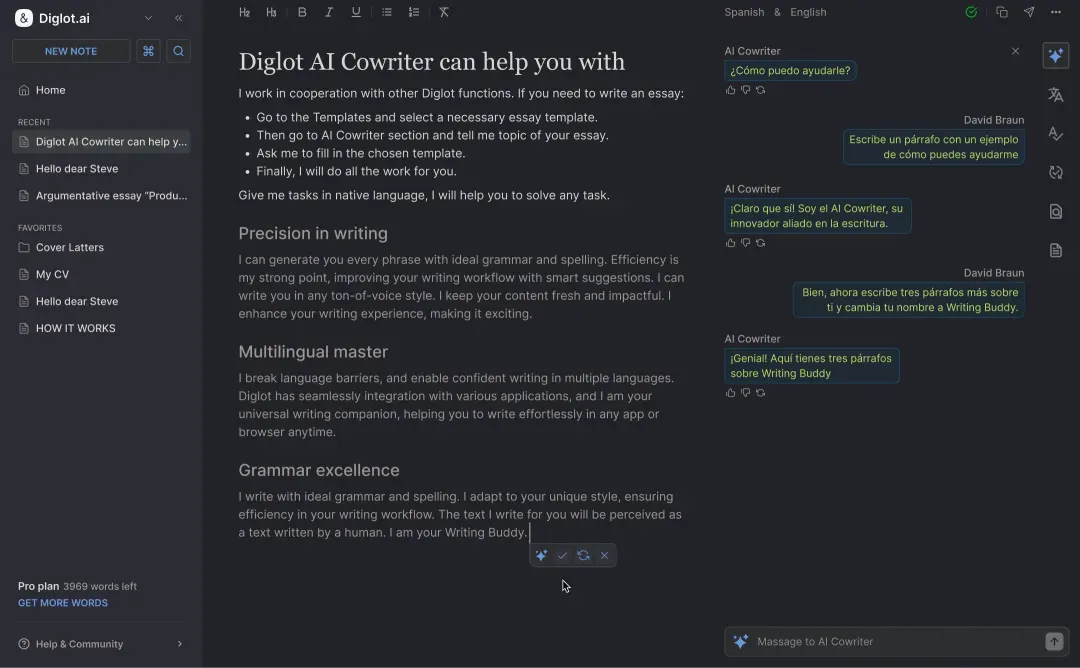

Step 4 — Use authorship logging when stakes are high

For high-stakes documents — admission essays, journal submissions, portfolio pieces — there are now tools that record your writing process as a tamper-evident log. Diglot's Authorship Certificate is one example: it records every edit, paste, and AI-assist as a signed event chain so you can produce verifiable evidence that the document was written, not generated. (We are biased about this one — we built it specifically because of the AI-accusation problem.)
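The general idea behind a tamper-evident event log can be sketched with a hash chain: each event's signature covers the previous event's signature, so changing or removing any earlier entry invalidates everything after it. This is a generic illustration and not Diglot's implementation, whose internals are not described here. It uses an HMAC with a shared secret for brevity, whereas a real system would more likely use asymmetric signatures and trusted timestamps. All names are hypothetical.

```python
import hashlib
import hmac
import json


def _sign(payload: dict, key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the payload."""
    message = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def append_event(chain: list[dict], key: bytes, kind: str, detail: str, timestamp: str) -> None:
    """Add an event whose signature also covers the previous signature."""
    prev = chain[-1]["signature"] if chain else "genesis"
    payload = {"kind": kind, "detail": detail, "timestamp": timestamp, "prev": prev}
    chain.append({**payload, "signature": _sign(payload, key)})


def verify_chain(chain: list[dict], key: bytes) -> bool:
    """Recompute every signature and check each link to its predecessor."""
    prev = "genesis"
    for event in chain:
        payload = {k: v for k, v in event.items() if k != "signature"}
        if payload.get("prev") != prev:
            return False
        if not hmac.compare_digest(_sign(payload, key), event["signature"]):
            return False
        prev = event["signature"]
    return True
```

Editing the `detail` of any recorded event, or deleting one from the middle, makes `verify_chain` return `False`. That property is what lets a log of edits and pastes serve as evidence rather than as a claim.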

4. What to do if you have already been flagged

If a teacher, professor, or editor has already accused your work of being AI-generated, respond with calm evidence rather than apology. Acknowledge the system flagged the text, then show the draft history, the plagiarism report, and the documented research on detector bias against non-native English writing. Most reasonable instructors update their process once they see the data.

- Acknowledge the concern, not the accusation. "I understand the system flagged this. Let me show you the writing process."

- Show your draft history. Open Google Docs version history or your editor's revision timeline live, in the meeting if possible.

- Show the plagiarism report. Demonstrate that the work is original by source comparison, which is a much harder evidence base than "the AI detector said so."

- Cite the bias. Reference the documented research showing AI detectors have high false-positive rates on non-native English writing. This reframes the conversation from "did you cheat?" to "is this tool reliable for ESL students?"

In our experience working with student users, the last step, citing the bias, is the one that ends the accusation fastest. Most reasonable instructors do not know about the bias yet. Once they do, they update their process, and you have moved the conversation from defending yourself to defending all the ESL students who come after you.

5. The wider problem with detection-based grading

Treating an AI detector score as evidence of cheating is poor policy. The tools are bad at the task, the bias against non-native speakers is documented in peer-reviewed research, and the punishments are severe. Schools that rely on detectors as sole evidence are systematically penalising the students who need the most support, while better process-based assessment exists.

The better approach, increasingly adopted by thoughtful institutions, is process-based assessment: looking at draft histories, in-class writing samples, and oral discussions of the work. These are harder to fake and far less likely to penalise ESL students for writing carefully. If you are an instructor reading this, that is the more defensible system.

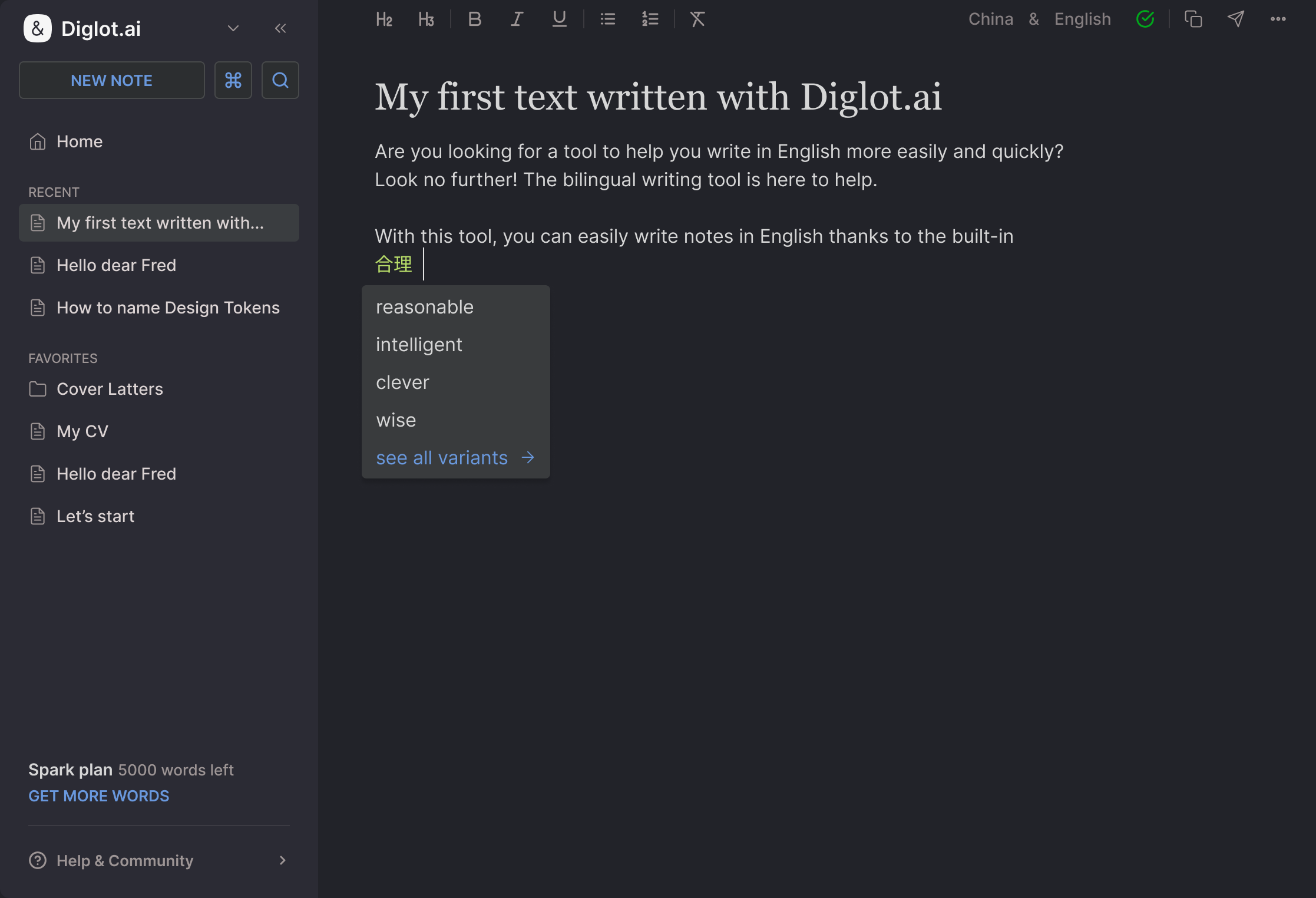

How Diglot fits this

Diglot is built for non-native English writers, and the AI-accusation problem sits at the centre of what we ship. The product covers every step of this defense workflow: a plagiarism checker for the originality baseline, paraphrasing to vary rhythm, L1-aware grammar review that does not flatten your voice, and a signed Authorship Certificate for the work that matters most.

- Plagiarism checker — for the verifiable originality baseline.

- Paraphrasing tool — to introduce natural sentence-rhythm variation when prose feels too uniform.

- Grammar checker — for the language pass, without flattening your voice into "textbook predictable."

- Authorship Certificate — the tamper-evident log of your writing process for high-stakes documents.

For more research on detector bias, court rulings, and what an authorship log actually proves, read the Authorship Certificate research category — including the 2026 update on AI-detection lawsuits that is reshaping how universities use these tools. For a step-by-step defense strategy, read how to prove your essay is human-written. If your writing is being questioned by a client rather than a professor, see what to do if a client says your writing was AI-generated. For tips on making your English sound more natural (and less flaggable), read how to make your English writing sound natural. If you want more on the plagiarism side of this workflow, the plagiarism checker article category covers more guides. If you want the broader bilingual workflow, the ESL writing tool overview is the start.

Final thought

If your English is good, you will be flagged. That is not a moral judgment but a tooling failure. Build the defense before you need it: a plagiarism report, a saved draft history, varied sentence rhythm, and for the work that matters most, an authorship log. These are the new baseline for non-native English writers in 2026.

Your work is yours. Make it provable.